- Keep the following terms in mind before starting this session.

- Hyperplane.

- Margins.

- Support Vectors.

- Kernel.

- SVM = Support Vector Machine.

- SVR = Support Vector Regression.

- Hi, and welcome to the 4th part of the Regression models series: Support Vector Regression (SVR), which adapts the familiar ML model Support Vector Machine (SVM) to regression problems.

- I recommend looking into the intuition behind SVM (Support Vector Machine) first to get a better understanding of SVR.

- The Support Vector algorithm is a nonlinear generalization of the Generalized Portrait algorithm. It is firmly grounded in the framework of statistical learning theory, or VC (Vapnik-Chervonenkis) theory.

- In a nutshell, VC theory characterizes properties of learning machines which enable them to generalize well to unseen data.

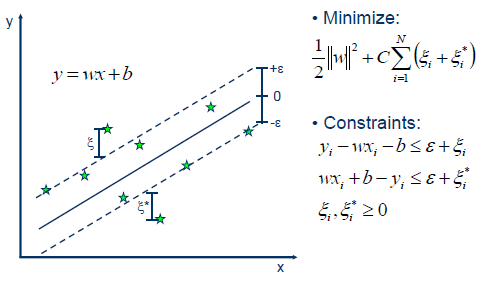

- Our goal is to find a function f(x) that has at most ε deviation from the actually obtained targets yi for all the training data, and at the same time is as flat as possible.

- It is considered a non-parametric technique because it relies on kernel functions.

- In other words, we do not care about errors as long as they are less than ε, but we will not accept any deviation larger than this.
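- The "ignore errors smaller than ε" idea can be sketched as a loss function. This is a minimal illustration, not a full SVR solver: the function name `epsilon_insensitive_loss` and the sample values are made up for this example, and it shows the common soft variant in which deviations beyond ε are penalized linearly rather than forbidden outright.

```python
def epsilon_insensitive_loss(y_true, y_pred, epsilon=0.1):
    """Per-sample loss: zero inside the epsilon tube, linear outside it."""
    return [max(0.0, abs(t - p) - epsilon) for t, p in zip(y_true, y_pred)]


# Hypothetical targets and predictions, chosen only for illustration.
y_true = [1.0, 2.0, 3.0]
y_pred = [1.05, 2.5, 2.0]

losses = epsilon_insensitive_loss(y_true, y_pred, epsilon=0.1)

# The first prediction is off by less than epsilon, so it costs nothing;
# the other two are charged only for the part of the error beyond epsilon.
print(losses)
```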

- The key difference between SVM and SVR is that SVM is used for classification, where the target is a discrete class label, whereas SVR is used for regression, where the target is a continuous value.

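- When it comes to SVR, the following terms are used very frequently:

- Kernel:

- A function used to map lower-dimensional data into a higher-dimensional space.

- Commonly used kernels are linear, polynomial, sigmoid and RBF (radial basis function).

- Computing similarities in the higher-dimensional space through the kernel, without explicitly constructing the mapping, is known as the kernel trick.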
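- To make the kernel trick concrete, here is a small sketch in plain Python. It assumes a degree-2 polynomial kernel on 2-D inputs; the helper names (`poly2_kernel`, `explicit_map`, `rbf_kernel`) and the sample points are illustrative, not taken from any library. The kernel value computed in the original 2-D space matches the dot product computed after explicitly mapping both points into 3-D.

```python
import math


def dot(u, v):
    """Plain dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def poly2_kernel(x, z):
    """Degree-2 polynomial kernel (no constant term): k(x, z) = (x . z)^2."""
    return dot(x, z) ** 2


def explicit_map(x):
    """Explicit 3-D feature map whose dot product equals poly2_kernel (2-D inputs only)."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def rbf_kernel(x, z, gamma=0.5):
    """RBF kernel: exp(-gamma * squared Euclidean distance)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * sq_dist)


x = (1.0, 2.0)
z = (3.0, 0.5)

k_trick = poly2_kernel(x, z)                          # computed in 2-D
k_explicit = dot(explicit_map(x), explicit_map(z))    # computed in 3-D

print(k_trick, k_explicit)
print(rbf_kernel(x, z))
```

- The two printed kernel values agree up to floating-point rounding, which is the point of the trick: the higher-dimensional features never have to be built. For the RBF kernel the corresponding feature space is infinite-dimensional, so only the kernel form is practical.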

- Support Vectors:

- The actual data points that lie closest to the boundary lines; they determine the fitted function.

- Whether a point is a support vector depends on the distance between the data point and the boundary line.

- Hyperplane:

- In SVM, the hyperplane is the key element that separates the data classes; we can think of it as the line between the classes.

- In SVR, the hyperplane plays a similar role, but as discussed before, the key change is that here it is a line fitted through continuous target values.

- We can consider this the best-fit line produced by the model with our chosen kernel.

- Boundary Plane or Decision Boundary (margin of tolerance):

- As the name suggests, the boundary defines the upper and lower limits around the hyperplane.

- It acts as a margin between the hyperplane and the data points. Interestingly, the support vectors can lie on the boundary line or outside it.

- The distance between each boundary plane and the hyperplane is epsilon (ε), so the boundary lines are drawn at +ε and -ε from the hyperplane.

- The image below illustrates the points discussed above.


Ref: http://www.saedsayad.com/support_vector_machine_reg.htm
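- The relationship between the ±ε boundary lines and the support vectors can also be checked numerically. The sketch below assumes scikit-learn and NumPy are installed and uses synthetic data (a noisy sine curve) with hyperparameters picked only for illustration. It fits an `SVR` and compares the absolute residuals of support vectors and non-support vectors against ε.

```python
import numpy as np
from sklearn.svm import SVR

# Synthetic 1-D regression data (an assumption for this demo).
rng = np.random.default_rng(0)
X = np.sort(rng.uniform(0, 5, size=(60, 1)), axis=0)
y = np.sin(X).ravel() + rng.normal(scale=0.1, size=60)

epsilon = 0.2
model = SVR(kernel="rbf", C=10.0, epsilon=epsilon)
model.fit(X, y)

# Distance of every training point from the fitted function.
residuals = np.abs(y - model.predict(X))

# Boolean mask marking which training points became support vectors.
is_sv = np.zeros(len(X), dtype=bool)
is_sv[model.support_] = True

print("support vectors:", is_sv.sum(), "of", len(X))
print("smallest |residual| among support vectors:", residuals[is_sv].min())
print("largest |residual| among the other points:", residuals[~is_sv].max())
```

- Up to the solver's numerical tolerance, the support vectors should sit on or outside the ε boundary, while the remaining points fall inside it and do not influence the fitted function.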

- Why SVR, and how does it differ from Linear Regression?

- In ordinary linear regression models (SLR, MLR and polynomial), we try to minimize the error and derive our best-fit line from that.

- In SVR, we instead try to keep the errors within the boundary threshold ε. Errors inside the threshold are ignored, which makes the model less sensitive to small deviations and can give better predictions on some datasets.

- How to write your first SVR program in Python
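- As a starting point, here is a minimal sketch of an SVR program. It assumes scikit-learn and NumPy are installed, and it uses synthetic data because no dataset is specified at this step; the hyperparameters (`C=10.0`, `epsilon=0.1`, RBF kernel) are illustrative choices, not tuned values. Features are standardized first because SVR is sensitive to feature scale.

```python
import numpy as np
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Synthetic nonlinear data (an assumption; replace with your own dataset).
rng = np.random.default_rng(42)
X = rng.uniform(0, 10, size=(200, 1))
y = 3.0 * np.sin(X).ravel() + 0.5 * X.ravel() + rng.normal(scale=0.2, size=200)

# Hold out a quarter of the data to evaluate the model.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Scale the features, then fit an RBF-kernel SVR.
model = make_pipeline(StandardScaler(), SVR(kernel="rbf", C=10.0, epsilon=0.1))
model.fit(X_train, y_train)

# Predict on the held-out data and report the R^2 score.
y_pred = model.predict(X_test)
r2 = r2_score(y_test, y_pred)
print("R^2 on held-out data:", r2)
```

- To compare against the earlier regression parts, the same train/test split can be reused with a linear or polynomial model in place of the `SVR` step.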

- If you completed the above step, congratulations: you have created your first SVR machine learning model using Python.

- Create a Machine Learning model to predict Car Price and compare your results with the Multi-Linear and Polynomial results. [Car Price Prediction]

- Create a Machine Learning model to predict Air Quality. [Air Quality]

- Create a Machine Learning model to predict the ERP (estimated relative performance) of a computer and compare it with the Multi-Linear and Polynomial results. [ERP Prediction]

- Create a Machine Learning model to predict Wine Quality using SVR. [Wine Quality]

Use Cases reference

SVM - Support Vector Machine

How Does SVR Works

how does support vector regression work intuitively