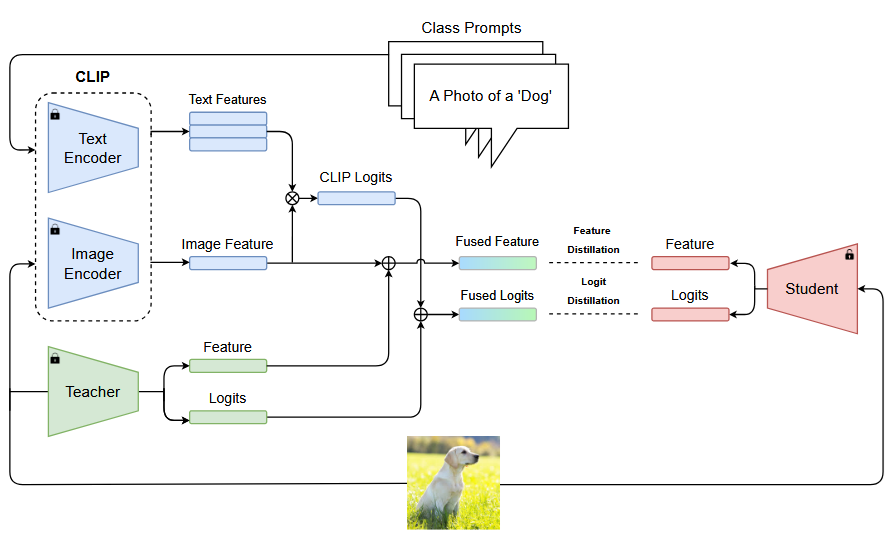

The source code for the paper "Enriching Knowledge Distillation with Cross-Modal Teacher Fusion".

Also, see our other works:

- Attention as Geometric Transformation: Revisiting Feature Distillation for Semantic Segmentation [WACV'26]

- A Comprehensive Survey on Knowledge Distillation [TMLR'25]

- Adaptive Inter-Class Similarity Distillation for Semantic Segmentation [MTAP'25]

Environments:

- Python 3.8

- PyTorch 1.7.0

Install the package:

sudo pip3 install -r requirements.txt

sudo python setup.py develop

pip install git+https://github.com/openai/CLIP.git

pip install ftfy regex tqdm

- Download the CIFAR-100 dataset and put it in `./data`.

- Download `cifar_teachers.tar` and untar it to `./download_ckpts` via `tar xvf cifar_teachers.tar`.

- Cache the CLIP features and logits using the `cache.py` file. It saves the features and logits in `./clip_cache`.
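Before launching a training run, it can help to confirm that the three preparation steps above actually produced their outputs. The following is a minimal sketch of such a check, not part of the repository: the helper name `missing_prerequisites` is hypothetical, and it only assumes the directory names mentioned above (`./data`, `./download_ckpts`, `./clip_cache`), without assuming anything about the files inside them.

```python
from pathlib import Path
from typing import List

# Directory names taken from the setup steps above. The helper itself is a
# hypothetical convenience and is not shipped with the repository.
REQUIRED_DIRS = ("data", "download_ckpts", "clip_cache")


def missing_prerequisites(root: str = ".") -> List[str]:
    """Return the required directories that are absent or empty under `root`."""
    missing = []
    for name in REQUIRED_DIRS:
        path = Path(root) / name
        # An existing but empty directory is treated as an unfinished step.
        if not path.is_dir() or not any(path.iterdir()):
            missing.append(name)
    return missing
```

Calling `missing_prerequisites()` from the repository root returns an empty list once the dataset, the teacher checkpoints, and the CLIP cache are all in place; any names it returns point to the step that still needs to be run.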

After completing the steps above, train the student using:

# KD

python tools/train.py --cfg configs/cifar100/kd/resnet32x4_resnet8x4.yaml

# RichKD (L)

python tools/train.py --cfg configs/cifar100/richkd/richkd_L.yaml

# RichKD (F)

python tools/train.py --cfg configs/cifar100/richkd/richkd_F.yaml

# RichKD (L+F)

python tools/train.py --cfg configs/cifar100/richkd/richkd.yaml

If you use this repository for your research or wish to refer to our distillation method, please use the following BibTeX entries:

@article{mansourian2025enriching,

title={Enriching Knowledge Distillation with Cross-Modal Teacher Fusion},

author={Mansourian, Amir M and Babaei, Amir Mohammad and Kasaei, Shohreh},

journal={arXiv preprint arXiv:2511.09286},

year={2025}

}

@inproceedings{mansourian2026attention,

title={Attention as Geometric Transformation: Revisiting Feature Distillation for Semantic Segmentation},

author={Mansourian, Amirmohammad and Jalali, Arya and Ahmadi, Rozhan and Kasaei, Shohreh},

booktitle={Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision},

pages={1287--1297},

year={2026}

}

@article{mansourian2025a,

title={A Comprehensive Survey on Knowledge Distillation},

author={Amir M. Mansourian and Rozhan Ahmadi and Masoud Ghafouri and Amir Mohammad Babaei and Elaheh Badali Golezani and Zeynab yasamani ghamchi and Vida Ramezanian and Alireza Taherian and Kimia Dinashi and Amirali Miri and Shohreh Kasaei},

journal={Transactions on Machine Learning Research},

issn={2835-8856},

year={2025}

}

@article{mansourian2025aicsd,

title={AICSD: Adaptive inter-class similarity distillation for semantic segmentation},

author={Mansourian, Amir M and Ahmadi, Rozhan and Kasaei, Shohreh},

journal={Multimedia Tools and Applications},

pages={1--20},

year={2025},

publisher={Springer}

}

This codebase borrows heavily from Logit Standardization in Knowledge Distillation. Thanks to the authors for their excellent work.